Why No-Code Doesn't Mean No Code

By James Virgo

18th April 2024

Introduction

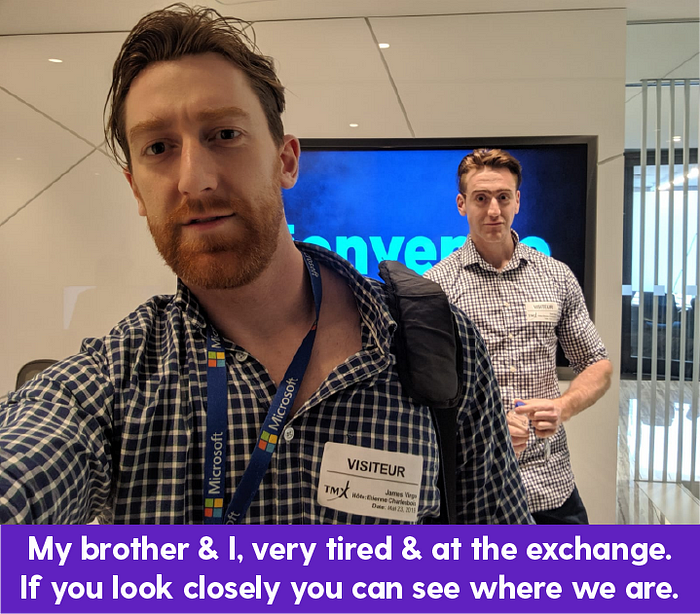

In 2019 our startup development studio was building real-time analytics technology for the largest stock exchange in Canada. The project required us to analyse more than 100 million records in real time every day & to run 56 simultaneous real-time processes over that data. We also needed to build a nice-looking user interface that would let end users load & visualize these big data sets without any slowness.

Building a system like that from scratch is quite a challenge. But the difficulty rose beyond what was reasonable when the stock exchange hosting the system commissioned a server that was nowhere near powerful enough to handle the data requirements.

Although we repeatedly asked for more server capacity, the business side of the organization told us that it was important that we write “efficient code”. They explained that they had strict budget requirements of their own. This forced us to do a lot of research & development into how we could process all that data on a server that was only a little more powerful than my trusty old Dell laptop.

After two years of R&D & hundreds of thousands of dollars invested, our small team of resourceful developers finally figured out how to process all that data on a server that cost only $400 per month to run. Yes… that is right… we managed to process all the data from three major Canadian stock markets, including real-time backups & analytics, on a server that cost a total of $400 per month.

The way our small team approached R&D was to study different open source databases at a very low level & to experiment with their configurations. We had a couple of superstars who, through continual iteration, focused on three main things that needed to be optimized.

The first was getting data into the database, the second was getting data back out of it & the third was compressing the data we were storing.
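The article does not say how the team compressed its data, so the following is only a generic illustration of one widely used trick for time-ordered records such as market ticks: delta encoding. Consecutive timestamps are stored as the gaps between them, which are small and repetitive, so a general-purpose compressor like Python's `zlib` shrinks them far more than it shrinks the raw values. The synthetic timestamps and the helper names are assumptions made for this sketch.

```python
import struct
import zlib


def delta_encode(values: list[int]) -> list[int]:
    """Keep the first value, then store each value as the gap from its predecessor."""
    return values[:1] + [b - a for a, b in zip(values, values[1:])]


def delta_decode(deltas: list[int]) -> list[int]:
    """Rebuild the original values with a running sum of the gaps."""
    values, total = [], 0
    for d in deltas:
        total += d
        values.append(total)
    return values


def packed_size(values: list[int]) -> int:
    """Bytes needed after packing as little-endian int64 and compressing with zlib."""
    raw = struct.pack(f"<{len(values)}q", *values)
    return len(zlib.compress(raw, 9))


if __name__ == "__main__":
    # Synthetic tick timestamps in milliseconds: roughly one tick every 250 ms.
    start_ms = 1_700_000_000_000
    timestamps = [start_ms + i * 250 + (i % 7) for i in range(100_000)]

    deltas = delta_encode(timestamps)
    assert delta_decode(deltas) == timestamps  # the encoding is lossless

    print("raw timestamps, compressed:", packed_size(timestamps), "bytes")
    print("delta-encoded, compressed: ", packed_size(deltas), "bytes")
```

Real tick data is far less regular than this synthetic series, so the savings would be smaller in practice; the point is only that choosing a representation before compressing can matter as much as the compressor itself.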

Fear No-Code

One thing I have learnt working with big data is that it really highlights inefficient programming. If you work with small data, you might not care much about efficiency. A one-second delay when getting 100 rows of data out of your database is fine. But now multiply that one-second delay by 1 million rows of data. Suddenly the delay is 1 million seconds: nearly 278 hours, or more than eleven days of nonstop waiting. If your user has to wait that long on each click… your program might work, but no one would ever know, because no one is ever going to sit through that much wait time.
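The arithmetic above can be checked with a few lines of Python. This is a back-of-the-envelope sketch that assumes every row costs the same fixed delay, so total time grows linearly with the number of rows; the helper names and the 40-hour working week are illustrative assumptions.

```python
SECONDS_PER_HOUR = 3600
HOURS_PER_WORKING_WEEK = 40  # assumption: a standard 40-hour working week


def total_wait_seconds(rows: int, seconds_per_row: float) -> float:
    """Total wait when every row incurs the same fixed delay (linear scaling)."""
    return rows * seconds_per_row


def describe(rows: int, seconds_per_row: float) -> str:
    """Express the total wait in seconds, hours, days and working weeks."""
    seconds = total_wait_seconds(rows, seconds_per_row)
    hours = seconds / SECONDS_PER_HOUR
    days = hours / 24
    weeks = hours / HOURS_PER_WORKING_WEEK
    return (f"{rows:,} rows at {seconds_per_row:g} s/row = {seconds:,.0f} s "
            f"= {hours:.1f} h = {days:.1f} days = {weeks:.1f} working weeks")


if __name__ == "__main__":
    print(describe(1_000_000, 1.0))
    # prints 1,000,000 rows at 1 s/row = 1,000,000 s = 277.8 h = 11.6 days = 6.9 working weeks
```

Because the cost is linear, any per-row inefficiency that is invisible on 100 rows grows by the same factor as the data.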

As hardcore technology enthusiasts, our development team did not even consider a no-code app builder as a possible solution to our big data problem. We knew that these tools wouldn’t be able to accommodate our stringent requirements. We also knew that these tools would not allow us to own our intellectual property.

I have always been fearful of no-code tools because, for the most part, no-code locks you into an annual subscription model where, as an enterprise, you are required to fork over hundreds of thousands of dollars every year. Then, when all is said and done, you are not allowed to extract your code or own your intellectual property.

In our case, I was also afraid that we would be locked into the features that the no-code product supported. If we needed to add a new feature, we could use the no-code APIs. But then we would just have to hope that, behind the scenes, the no-code platform was handling the data efficiently enough for the end solution to work as expected. With data sets this massive, that was a huge risk.

To add to my trepidation about adopting no-code, our studio often works with businesses that come to our development team for help with their no-code products. We had seen people layering feature on top of feature within their no-code products until those products gradually slowed down. Sometimes these people had already done 80% of their development, paid the no-code platform & paid no-code developers… but had then been unable to finish their products because the no-code platform simply lacked the features they needed to complete their solutions.

Lastly, there was also the problem of data security. How could we confidently meet the security requirements in the contracts we had signed with the stock exchange if we didn’t know exactly how the systems had been built?

For all of these reasons, although we loved the idea of no-code (fast to market, quick to prototype etc.), we were also fearful of it.

Love No-Coding

In 2019 we had cracked the case. Our team had finally figured out how to process these massive data sets for our stock exchange client. We could now handle massive data sets efficiently on very low-cost infrastructure.

Now that we knew how to process massive data sets efficiently, our team was suddenly contacted by a slew of different companies wanting us to build them real-time, big data analytics software. These businesses ranged from healthcare and food safety right through to more capital markets software.

With the solution in our back pocket, you might think that this was easy money. But even if we had been legally allowed to take the solution we had built for one company & give the exact same solution to another company, it would not have been possible in practice. Each system requires some bespoke touches in data representation, analytics or storage. So we still had to do the work to deliver each new system.

Our development team has always used automatic code generators to help speed up our work. We use auto code generators in conjunction with libraries of code that we keep for internal use. We do this for the simple reason that no one likes typing out lines & lines of code that they’ve already written 100 times before. It is boring & not what programming is supposed to be about.
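To make the idea concrete, here is a deliberately tiny, hypothetical template-based generator in Python. It is not the studio's or Dittofi's generator; `generate_model`, the template and the `Trade` schema are illustrative assumptions. It turns a short field list into a readable dataclass, so that boilerplate never has to be typed out by hand.

```python
from string import Template

# Hypothetical template for a simple data model; $name and $fields are filled in.
CLASS_TEMPLATE = Template('''\
from dataclasses import dataclass


@dataclass
class $name:
$fields
''')


def generate_model(name: str, fields: dict[str, str]) -> str:
    """Render Python source for a dataclass with one annotated line per field."""
    body = "\n".join(f"    {field}: {type_name}" for field, type_name in fields.items())
    return CLASS_TEMPLATE.substitute(name=name, fields=body)


if __name__ == "__main__":
    source = generate_model("Trade", {"symbol": "str", "price": "float", "volume": "int"})
    print(source)

    # Execute the generated source to show that it is valid, working Python.
    namespace: dict = {"__name__": "generated_models"}
    exec(source, namespace)
    print(namespace["Trade"](symbol="ABC", price=10.5, volume=100))
    # prints Trade(symbol='ABC', price=10.5, volume=100)
```

Because the output is ordinary source text, it can be written to a file, reviewed and kept like any hand-written module; real generators apply the same idea to far larger templates covering APIs, database access and user interfaces.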

As we built more systems, a few members of the team noticed patterns beginning to emerge. Gradually, our libraries of code generators became more & more complete.

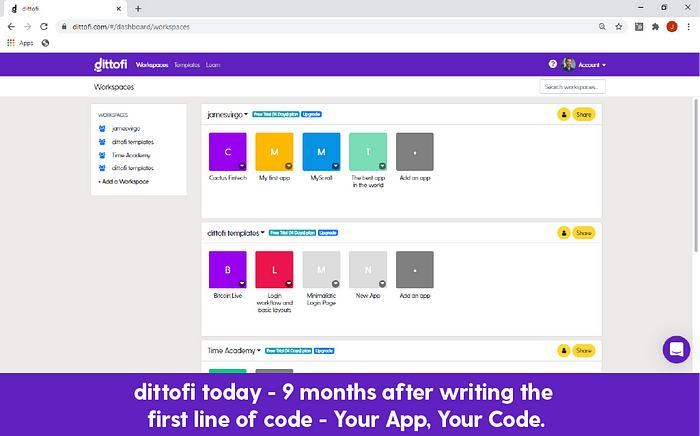

In 2020, our knowledge of auto code generation had reached the point where we decided it would be beneficial to wrap up our 10+ years of development knowledge into an automatic code generator built in the style of a no-code tool.

The objective was to give users who wanted a full stack, no-code solution access to world class, human readable code that they could own. We wanted everyone to be able to generate & own code that looked exactly like the systems that we had been building for our clients.

Giving users control over their code mattered because we never wanted to trap users on our platform, paying yearly subscription fees with no hope of ever owning their IP. We wanted to give everyone the opportunity to own their code. Even if you never think you will want your code, you never know when you’re going to need it.

On the business side, we set up a corporation for the product in April 2020. We launched in January 2021 & now have 40+ users on our platform automatically generating & owning their own full stack, scalable computer code.

Right now everything is in beta & 100% free. If you’d like to try it out, you can do so at www.dittofi.com. It will not stay free for much longer, as we need to start making some money so that we can keep researching, developing & building more of this kind of world-class software for the masses.

Our mission is to remove the divide that has emerged between code & no-code & to make high quality software & code available for all.